RAG Is NOT Governance: The Limits to ‘Safer GenAI’

Most organizations today struggle with document and data governance. The structures and processes behind document management systems, applications, and data have rarely received the level of planning and governance needed to define and implement high-trust, compliant AI. The path from personal productivity AI and analytics to an integrated, trusted organization starts with aligning outcomes and knowledge owners, which we explore further below.

Retrieval Improves Access, Not Truth

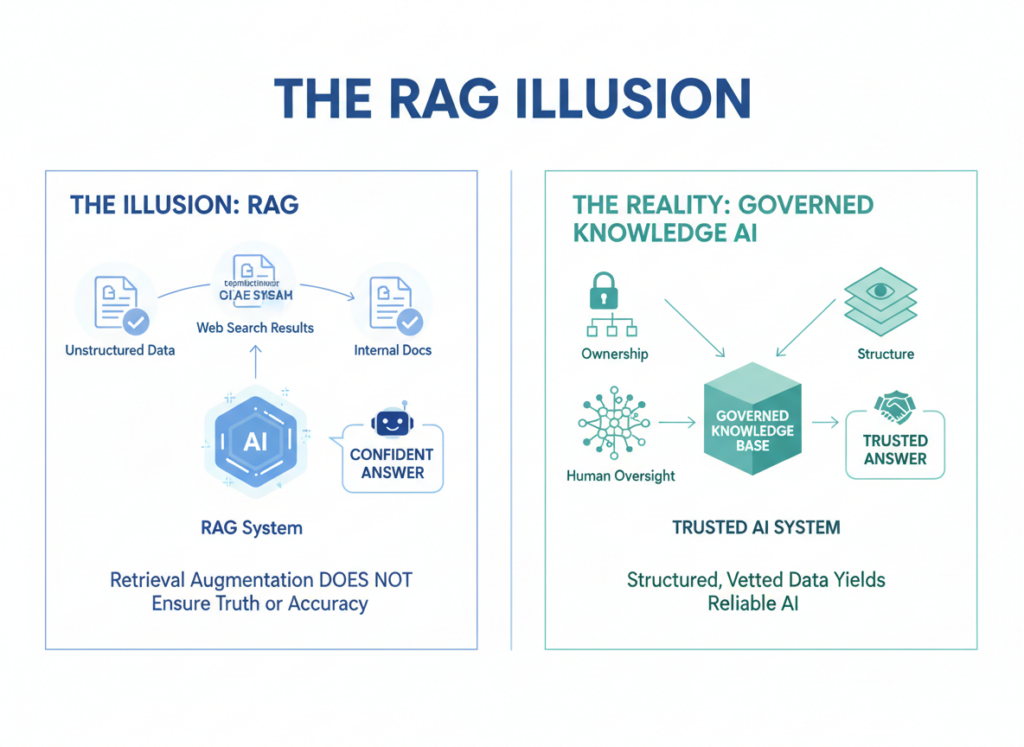

Retrieval-Augmented Generation (RAG) has quickly become the default safety narrative for enterprise GenAI. By grounding responses in internal documents, RAG appears to reduce hallucinations and increase relevance. This leads many organizations to believe retrieval creates control. The simple truth is that grounding moves us closer to safer AI systems, but it is not enough on its own.

RAG retrieves information. It does not verify accuracy. It does not resolve conflicts between sources. It does not determine which version represents truth, or the brand’s preference. When documents disagree, RAG simply returns whichever chunk scores highest. The model sounds confident, but confidence is not correctness.
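The behavior described above can be illustrated with a minimal sketch. It assumes a toy retriever that scores chunks by word overlap with the query; real systems typically use embeddings, and the document IDs, texts, and function names here are hypothetical. The point is only that the highest score wins, whether or not the source is current.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase a string and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap_score(query: str, chunk_text: str) -> float:
    """Toy relevance score: fraction of query tokens that appear in the chunk."""
    q = tokenize(query)
    return len(q & tokenize(chunk_text)) / len(q) if q else 0.0

def retrieve_top(query: str, chunks: list[dict]) -> dict:
    """Return the single highest-scoring chunk, as a naive retriever would."""
    return max(chunks, key=lambda c: overlap_score(query, c["text"]))

# Two hypothetical policy documents that disagree with each other.
chunks = [
    {"id": "policy-2021", "text": "Refund window for customers is 60 days from purchase."},
    {"id": "policy-2024", "text": "Returns are accepted within 30 days."},
]

top = retrieve_top("What is the refund window for customers?", chunks)
print(top["id"])  # the older document wins because its wording matches the query
```

Nothing in the scoring step knows that the 2021 policy was superseded. That knowledge has to come from governance metadata, not from retrieval.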

This distinction now matters operationally. A McKinsey report showed that 47% of organizations have already experienced negative consequences from GenAI. These were AI solutions, but not ones in the Responsible AI category. Failures like these rarely originate from model behavior alone. They originate from weak knowledge foundations and inadequate workflows, amplified by automation.

Document Quality Sets the Accuracy Ceiling

RAG accuracy is bounded by document quality and by how well sanctioned documents are selected. That quality ceiling is often far lower than leaders expect. Most enterprises lack consistent document governance across departments. Ownership is unclear. Versions overlap. Outdated information is not always purged from the system. Definitions drift quietly over time.

Beyond that, business glossaries are incomplete or unused. Data catalogs exist but lack accountability. Domain ownership changes without controlled review. RAG cannot correct these problems. It faithfully retrieves what exists, regardless of quality.
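Some of these gaps can be surfaced before any retrieval is built. The following is a rough sketch, assuming each document record carries `id`, `title`, `owner`, and `active` fields; these field names and the `audit_documents` helper are illustrative assumptions, not part of any particular catalog product.

```python
from collections import defaultdict

def audit_documents(docs: list[dict]) -> dict:
    """Flag documents with no owner and titles that have more than one active version."""
    missing_owner = [d["id"] for d in docs if not d.get("owner")]

    # Group active documents by title to spot overlapping versions.
    active_by_title = defaultdict(list)
    for d in docs:
        if d.get("active", True):
            active_by_title[d["title"]].append(d["id"])
    overlapping = {t: ids for t, ids in active_by_title.items() if len(ids) > 1}

    return {"missing_owner": missing_owner, "overlapping_versions": overlapping}

# Hypothetical catalog entries.
docs = [
    {"id": "hr-001", "title": "Leave Policy", "owner": "HR", "active": True},
    {"id": "hr-002", "title": "Leave Policy", "owner": None, "active": True},
    {"id": "fin-001", "title": "Expense Policy", "owner": "Finance", "active": True},
]

report = audit_documents(docs)
print(report)
```

A report like this does not fix anything by itself. It gives knowledge owners a concrete list to resolve before the documents are exposed to retrieval.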

This creates a dangerous illusion. Answers appear precise. They remain structurally unreliable. Executives feel this tension directly. PwC reports that 66% of CEOs cite trust concerns around AI adoption. Trust erodes when answers change without explanation, or are found to be inaccurate.

RAG accelerates access. Governance sustains confidence.

Writing for RAG Is a Governance Responsibility

Most enterprise documents were written only for humans. They assume shared context, informal structure, and implicit meaning. RAG changes that assumption. Documents now serve both people and machines.

Clear structure becomes mandatory. Headings define semantic boundaries. Chunking determines retrieval relevance. Consistent terminology enables reliable interpretation. Dense prose and complex tables reduce accuracy. Poor formatting hides intent.
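To make the link between headings and chunking concrete, here is a minimal sketch of heading-based chunking. It assumes source documents mark headings with a leading `#` (Markdown style); that convention, the `chunk_by_heading` name, and the sample text are assumptions for illustration only.

```python
def chunk_by_heading(text: str) -> list[dict]:
    """Split text into one chunk per heading, keeping each heading with its body.

    Headings that have no body text beneath them produce no chunk.
    """
    chunks = []
    heading, body = "Untitled", []
    for line in text.splitlines():
        if line.startswith("#"):
            # A new heading closes the previous chunk, if it has content.
            if body:
                chunks.append({"heading": heading, "body": " ".join(body)})
            heading, body = line.lstrip("#").strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        chunks.append({"heading": heading, "body": " ".join(body)})
    return chunks

doc = """# Refund Policy
Refunds are accepted within 30 days.

# Shipping
Orders ship within 2 business days.
"""

chunks = chunk_by_heading(doc)
for c in chunks:
    print(c["heading"], "->", c["body"])
```

When headings are missing or inconsistent, a splitter like this falls back to one large "Untitled" chunk, which is one way poor structure quietly degrades retrieval.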

Learning to write for RAG is not a technical trick. It is a governance discipline. It aligns directly with prompt governance and content stewardship. If humans struggle to follow structure, models will fail silently.

An aspect of governance that is occasionally missed is intentionality. An AI system governed by a human knowledge manager needs to be easy to use. It also needs to ensure the knowledge manager is selective about each document placed into the trusted repository (the RAG system). The knowledge manager also needs to review and clean out the repository from time to time to keep the information current.

This challenge is not theoretical. The World Economic Forum reports that 86% of organizations expect AI to transform their business. Transformation without structured knowledge increases risk, not value.

Governance Enables Predictable Outcomes

Enterprises do not need creative AI for most workflows. They need predictable outcomes. Predictability and accuracy require governance layers that define truth, ownership, escalation, and review.

Governance determines when generation is permitted. It defines how answers are labeled. It enables auditability and accountability. RAG alone cannot provide these guarantees. It retrieves content but cannot judge authority.
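One way to picture such a layer is a gate that sits between retrieval and generation. The sketch below is illustrative only: the `Chunk` fields, the one-year review interval, and the `governed_answer` function are assumptions, and a real deployment would define its own rules for authority, labeling, and escalation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class Chunk:
    text: str
    owner: Optional[str]   # accountable knowledge owner, if any
    approved: bool         # sanctioned for the trusted repository
    last_reviewed: date    # date of the most recent human review

MAX_AGE = timedelta(days=365)  # assumed review interval, not a standard

def is_authoritative(chunk: Chunk, today: date) -> bool:
    """A chunk counts as authoritative only if it is owned, approved, and recently reviewed."""
    return (
        chunk.owner is not None
        and chunk.approved
        and today - chunk.last_reviewed <= MAX_AGE
    )

def governed_answer(chunks: list[Chunk], today: date) -> dict:
    """Decide whether generation is permitted and how the answer should be labeled."""
    trusted = [c for c in chunks if is_authoritative(c, today)]
    if not trusted:
        # No sanctioned source: do not generate, escalate to a human instead.
        return {"status": "escalate", "sources": [],
                "label": "No sanctioned source; route to a knowledge owner"}
    return {"status": "generate", "sources": trusted,
            "label": f"Grounded in {len(trusted)} reviewed source(s)"}

today = date(2025, 1, 15)
retrieved = [
    Chunk("Refund window is 60 days.", None, False, date(2021, 3, 1)),
    Chunk("Returns are accepted within 30 days.", "Customer Care", True, date(2024, 11, 1)),
]

result = governed_answer(retrieved, today)
print(result["status"], "|", result["label"])
```

The design choice worth noting is that the gate runs on metadata maintained by people. Retrieval supplies candidates; the governance layer decides which of them may speak.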

Organizations that invest in governance see measurable results. PwC found that companies with fewer AI trust concerns achieved higher shareholder returns. Trust is not philosophical. It is an economic factor.

This shift is now visible in leadership priorities. Deloitte reports data governance as the top concern for Chief Data Officers. Foundations are finally being addressed.

RAG Is a Tool, Not a Strategy

RAG has real value. It improves relevance. It reduces open-ended hallucination. Used correctly, it belongs inside a governed architecture.

But RAG is not governance. It does not define truth. It does not manage risk. It does not create accountability. Pointing RAG at poorly governed knowledge simply scales inconsistency faster. Enterprise governance requires human-in-the-loop systems, processes, and workflows.

The difference between success and failure is discipline. Governance establishes control before automation. It ensures GenAI operates within defined boundaries. RAG then becomes an accelerator, not a liability.

Here to help…

If accuracy matters, governance must lead. If outcomes matter, foundations come first.

Before expanding RAG, assess document maturity. Define ownership clearly. Enforce structure consistently. Establish authoritative sources of truth.

Then scale retrieval with confidence.

If your organization needs accurate and predictable Responsible AI outcomes, let’s talk. At kama.ai, governance is not optional. It is the starting point.